Top Ten Libraries of Python for Data Science

Summary

This blog explores essential Python libraries for data science, including NumPy for mathematical operations, Pandas for data wrangling, SciPy for scientific computations, Matplotlib for data visualization, Scikit-Learn for machine learning, TensorFlow for neural networks, Keras for high-level neural network API, Theano for mathematical expression evaluation, PyTorch for deep learning, and Seaborn for enhanced data visualization.

The volume of information generated in the world is growing exponentially. This ever-increasing volume of information is often referred to as “Big Data”. From academic institutions, large corporations, and governments to individuals, everyone has a lot of data, stored on everything from servers and hard disks to smartphones.

Is there anything we can learn from this historical data? Yes, and an entire branch of study has sprung up that is dedicated to finding ways and means to sift through and analyze large amounts of data in order to derive meaning from it.

That field is data science, and it is precisely this demand that data scientists meet.

Python for Data Science

Data scientists are not just researchers with an in-depth understanding of their domain. Along with this domain knowledge, they are also well versed in programming.

One of the staple programming languages used in data science is Python, which has been around since 1991. There are a few good reasons why Python became the language of choice for the field:

- Python code is readable and maintainable
- Python is compatible with Windows, Linux, and Mac
- Python has many robust libraries for data science
- It requires less code than other major programming languages
- It is an easy language to learn, especially for beginners in data science

Overall, it is generally considered easier to learn than C, C++, and Java, and more general-purpose than R or MATLAB.

Now let us discuss the common libraries that data scientists use in their day-to-day work to solve their problems.

Anaconda

Before we get started, I'd like to talk about Anaconda.

Anaconda is a versatile and powerful data science platform. It comes with many of the libraries required for data science, mathematics, or engineering work, and it includes the Spyder IDE and Jupyter Notebook. Anaconda makes everything much easier, especially for beginners, and quite a few of the libraries I will discuss are included in the Anaconda installation.

You can download anaconda from here: https://www.anaconda.com/distribution/

1. NumPy

NumPy is short for Numerical Python. It finds extensive use in mathematical, scientific, engineering, and data science applications. NumPy helps perform operations such as reshaping, slicing, complex mathematical operations, and statistical analysis.

Many other packages such as SciPy, Matplotlib, Scikit-learn, Pandas, etc. rely on NumPy to some extent for their functionalities. NumPy’s statistical analysis and linear algebra modules make NumPy an indispensable library in a data scientist’s toolkit. It provides many exceptional features to execute mathematical and logical operations on arrays and matrices.

While Python's built-in lists offer similar functionality, NumPy arrays have some advantages: they use less memory than a list holding the same numeric data, they are faster for numerical operations, and they are more convenient to work with. A detailed comparison of the two can be found here.
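To make the operations mentioned above concrete, here is a minimal sketch of reshaping, slicing, basic statistics, and linear algebra with NumPy. The array values and variable names are made up purely for illustration.

```python
import numpy as np

# Build a 1-D array of 12 integers and reshape it into a 3x4 matrix.
values = np.arange(12)
matrix = values.reshape(3, 4)

# Slicing: first two rows, last two columns.
block = matrix[:2, -2:]

# Vectorized arithmetic and statistics, with no explicit Python loop.
scaled = matrix * 2.5
column_means = matrix.mean(axis=0)
overall_std = matrix.std()

# Linear algebra: solve the system A @ x = b.
A = np.array([[3.0, 1.0], [1.0, 2.0]])
b = np.array([9.0, 8.0])
x = np.linalg.solve(A, b)

print(block)
print(column_means)
print(x)
```

Note that the arithmetic on `matrix` applies to every element at once, which is a large part of why NumPy code tends to be shorter and faster than the equivalent loop over Python lists.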

Here is the GitHub repository of NumPy.

Downloading NumPy: NumPy comes with Anaconda. However, it can be installed by using the command conda install numpy

2. Pandas

If you want to make your life easy as a data scientist, use Pandas. It is an open-source, widely used Python library for data wrangling. The name is derived from “panel data” and is also a play on “Python data analysis”. Pandas is written in Python, Cython, and C. It represents data either as a Series (a one-dimensional labeled array) or as a DataFrame (a two-dimensional labeled table).

Like many other libraries, Pandas is also built on top of NumPy.

Pandas makes everything from data creation to data manipulation and data wrangling easy to implement, and efficient too. It is also widely used in time series analysis. Pandas has a slew of functions which help to:

- Handle missing data
- Read text, CSV, Excel, and many other file formats for data analysis
- Merge, concatenate, slice, and reshape data
- Create Python objects with rows and columns from a SQL database
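As a rough illustration of a few of the tasks listed above, the sketch below fills a missing value, merges two tables, and aggregates the result. The store and revenue data are hypothetical; in practice you would typically load data with a reader such as `pd.read_csv`.

```python
import numpy as np
import pandas as pd

# Two small tables with made-up sample data; one revenue value is missing.
sales = pd.DataFrame({
    "store_id": [1, 2, 3, 4],
    "revenue": [250.0, np.nan, 310.0, 180.0],
})
stores = pd.DataFrame({
    "store_id": [1, 2, 3, 4],
    "city": ["Pune", "Delhi", "Pune", "Delhi"],
})

# Handle missing data: fill the gap with the column mean.
sales["revenue"] = sales["revenue"].fillna(sales["revenue"].mean())

# Merge the two tables on their shared key.
merged = sales.merge(stores, on="store_id", how="left")

# Aggregate: total revenue per city.
revenue_by_city = merged.groupby("city")["revenue"].sum()
print(revenue_by_city)
```

Filling with the mean is only one possible strategy; dropping the row with `dropna()` or filling with a constant may suit other datasets better.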

Downloading Pandas: Pandas comes with Anaconda. However, it can be downloaded by using the command conda install pandas

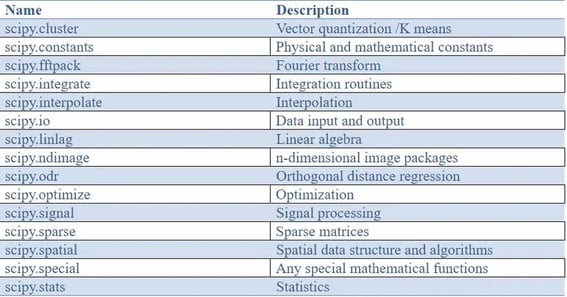

3. SciPy

SciPy is short for Scientific Python. It is an open-source library for science, engineering, and mathematics. In comparison to NumPy, it has more features for scientific computations.

That said, the SciPy library depends on the NumPy library for N-dimensional array functions. One major advantage of SciPy is that it has many sub-packages corresponding to a diverse range of applications, such as optimization (scipy.optimize), integration (scipy.integrate), linear algebra (scipy.linalg), statistics (scipy.stats), and signal processing (scipy.signal).
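Here is a small sketch that touches three SciPy sub-packages: numerical integration, scalar minimization, and a statistical test. The functions being integrated and minimized, and the synthetic samples, are chosen only for illustration.

```python
import numpy as np
from scipy import integrate, optimize, stats

# scipy.integrate: integrate sin(x) from 0 to pi (the exact answer is 2).
area, abs_error = integrate.quad(np.sin, 0.0, np.pi)

# scipy.optimize: find the minimum of the quadratic (x - 3)^2 + 1.
result = optimize.minimize_scalar(lambda x: (x - 3.0) ** 2 + 1.0)

# scipy.stats: two-sample t-test on synthetic data drawn with a fixed seed.
rng = np.random.default_rng(0)
group_a = rng.normal(loc=0.0, scale=1.0, size=200)
group_b = rng.normal(loc=0.5, scale=1.0, size=200)
t_stat, p_value = stats.ttest_ind(group_a, group_b)

print(area, result.x, p_value)
```

Each sub-package is imported separately, so a project only pays for the parts of SciPy it actually uses.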

Downloading SciPy: SciPy comes with Anaconda. However, it can be downloaded by using the command conda install scipy

4. Matplotlib

One of the most interesting parts of a data scientist's work is presenting data to an audience. The audience could be your colleagues, your boss, or researchers at a research dissemination event. Matplotlib helps visualize complex data, which makes it an essential library for any data scientist. A visual presentation is often the most effective way to communicate data. Here are some reasons:

- It makes complex quantitative data easier to interpret
- It helps to explore and analyze big data
- It helps to identify possible trends in data
- It helps a company decide where improvements are required
- It helps to show whether there is a relationship between variables

There is much more to explore in data visualization. Like other libraries, Matplotlib is built on NumPy. It can generate line plots, histograms, scatter plots, area plots, pie charts, bar plots, box plots, 3D plots, image plots, contour plots, stack plots, and more. Matplotlib also provides many formatting options to create and customize figures easily.
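As a quick illustration, the sketch below draws a line plot and a histogram side by side and saves them to a file. The data is synthetic, and the output file name `example_plots.png` is just a placeholder.

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend, so the script runs without a display
import matplotlib.pyplot as plt
import numpy as np

x = np.linspace(0, 2 * np.pi, 100)
rng = np.random.default_rng(42)
samples = rng.normal(size=500)

# One figure with two side-by-side axes.
fig, (ax_line, ax_hist) = plt.subplots(1, 2, figsize=(10, 4))

# Line plot with labels, a title, and a legend.
ax_line.plot(x, np.sin(x), label="sin(x)")
ax_line.plot(x, np.cos(x), linestyle="--", label="cos(x)")
ax_line.set_xlabel("x")
ax_line.set_ylabel("value")
ax_line.set_title("Line plot")
ax_line.legend()

# Histogram of the random samples.
ax_hist.hist(samples, bins=30, color="steelblue", edgecolor="white")
ax_hist.set_title("Histogram")

fig.tight_layout()
fig.savefig("example_plots.png", dpi=100)
```

In a Jupyter notebook you would normally skip the `matplotlib.use("Agg")` line and let the figure render inline instead of saving it.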

Downloading Matplotlib: Matplotlib comes with Anaconda. However, it can be downloaded by using the command conda install matplotlib

5. Scikit-Learn

This is an indispensable package in the ML Engineer's toolkit. Scikit-learn is also known as sklearn. It is the flexibility of sklearn that makes it stand out amongst other machine learning packages. Especially of help to beginners, this library provides a high-level interface for many ML tasks.

Again, it is built on top of NumPy, and it also builds on SciPy. You can use sklearn for many ML tasks such as regression, classification, clustering, dimensionality reduction, and model selection.

It offers regression algorithms such as support vector regression, decision tree regression, and random forest regression. It also has classification algorithms such as KNN, SVM, kernel SVM, naïve Bayes, decision tree classification, and random forest classification; clustering algorithms such as K-means and hierarchical clustering; and dimensionality reduction techniques such as PCA, LDA, and kernel PCA.
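The high-level interface is easiest to see in a short example. The sketch below trains a random forest classifier on the Iris dataset that ships with scikit-learn; the split ratio and hyperparameters are arbitrary choices for illustration, not recommendations.

```python
from sklearn.datasets import load_iris
from sklearn.ensemble import RandomForestClassifier
from sklearn.metrics import accuracy_score
from sklearn.model_selection import train_test_split

# Load a small built-in dataset: 150 flowers, 4 features, 3 classes.
X, y = load_iris(return_X_y=True)

# Hold out 25% of the data for testing, keeping class proportions equal.
X_train, X_test, y_train, y_test = train_test_split(
    X, y, test_size=0.25, random_state=0, stratify=y
)

# Fit the model and evaluate it on the held-out data.
model = RandomForestClassifier(n_estimators=100, random_state=0)
model.fit(X_train, y_train)
accuracy = accuracy_score(y_test, model.predict(X_test))

print(f"Test accuracy: {accuracy:.2f}")
```

Most estimators in the library follow the same `fit` and `predict` pattern, so swapping `RandomForestClassifier` for, say, `SVC` usually requires changing only one or two lines.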

Downloading Scikit-learn: Scikit-learn comes with Anaconda. However, it can be installed by using the command conda install scikit-learn

6. TensorFlow

TensorFlow has made artificial intelligence (AI) easier to learn. It is an open-source platform for machine learning, with a particular focus on neural networks. It was developed by the Google Brain team. Although it was initially developed for ML and deep neural network research at Google, it is now widely used in many fields for ML and deep learning.

Its code can run on many kinds of hardware, such as CPUs, GPUs, and TPUs. With TensorFlow, one can easily set up artificial and convolutional neural networks.
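As a small taste of the library, here is a sketch assuming TensorFlow 2.x. It shows a tensor operation and automatic differentiation, which is the mechanism neural network training relies on. The numbers are arbitrary.

```python
import tensorflow as tf

# Tensors behave much like NumPy arrays.
a = tf.constant([[1.0, 2.0], [3.0, 4.0]])
identity = tf.constant([[1.0, 0.0], [0.0, 1.0]])
product = tf.matmul(a, identity)

# Automatic differentiation: for loss = w^2 + 2w, d(loss)/dw = 2w + 2.
w = tf.Variable(3.0)
with tf.GradientTape() as tape:
    loss = w ** 2 + 2.0 * w
grad = tape.gradient(loss, w)

print(product.numpy())
print(float(grad))
```

The same code runs on a GPU without changes if one is available, since TensorFlow places operations on a device automatically.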

Here are a few applications where TensorFlow is used:

- Voice and sound recognition
- Text-based applications
- Image recognition
- Time series analysis
- Video detection

Details about these TensorFlow use cases can be found here.

Downloading TensorFlow: As Anaconda does not come with the TensorFlow library, it can be installed by using the command conda install -c conda-forge tensorflow

7. Keras

Keras is a neural network library that runs on top of backends such as TensorFlow, Theano, and Microsoft Cognitive Toolkit. It is an open-source, high-level neural network API written in Python. The project's GitHub page makes clear what its creators value: “being able to go from idea to result with the least possible delay is key to doing good research.” Hence, the advantage of Keras is that it lets you get results from neural network experiments quickly.

In comparison to Keras, TensorFlow exposes a lower-level API. Some guiding principles of Keras are user-friendliness, modularity, easy extensibility, and the ability to work with Python.

Keras is specifically useful when you need a deep learning library that:

- Allows quick and easy prototyping
- Supports convolutional networks, recurrent networks, or combinations of both
- Runs on both CPU and GPU
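To show what quick prototyping looks like, here is a minimal sketch that uses the Keras API bundled with TensorFlow 2.x (`tensorflow.keras`). The dataset is synthetic, and the layer sizes and epoch count are arbitrary assumptions.

```python
import numpy as np
from tensorflow import keras

# Synthetic binary classification data: the label is 1 when the
# four features sum to a positive number.
rng = np.random.default_rng(0)
features = rng.normal(size=(500, 4)).astype("float32")
labels = (features.sum(axis=1) > 0).astype("float32")

# Define a small fully connected network.
model = keras.Sequential([
    keras.Input(shape=(4,)),
    keras.layers.Dense(16, activation="relu"),
    keras.layers.Dense(1, activation="sigmoid"),
])

# Compile, train, and predict in a few lines.
model.compile(optimizer="adam", loss="binary_crossentropy", metrics=["accuracy"])
history = model.fit(features, labels, epochs=5, batch_size=32, verbose=0)
predictions = model.predict(features[:3], verbose=0)

print(predictions.shape)
```

Defining, compiling, and training the model takes about ten lines, which is the kind of short path from idea to result that the Keras authors describe.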

More details about Keras and its related functions can be found here.

Downloading Keras: As Anaconda does not come with the Keras library, it can be installed by using the command conda install -c conda-forge keras

8. Theano

Theano is an open-source Python library built on top of NumPy. Mathematical analysis of multi-dimensional arrays is not straightforward, and Theano provides efficient evaluation of such mathematical expressions. Theano has many features, which can be explored here; some of them are listed below:

- Tight integration with NumPy
- Transparent use of GPU
- Efficient symbolic differentiation
- Speed and stability optimizations
- Dynamic C code generation
- Extensive unit-testing and self-verification

Downloading Theano: As Anaconda does not come with the Theano library, it can be installed by using the command: conda install -c anaconda theano

9. PyTorch

PyTorch is another major open-source library for machine learning. It is based on the Torch library and used for many applications such as natural language processing and computer vision. This library was developed by Facebook's AI Research lab (FAIR). It provides two high-level features:

- Tensor computing (like NumPy) with strong acceleration via graphics processing units (GPUs)
- Deep neural networks built on a tape-based automatic differentiation (autodiff) system

Many features of the PyTorch library are explained here. At a granular level, it consists of the following components: torch, torch.autograd, torch.jit, torch.nn, torch.multiprocessing, and torch.utils. Two scenarios where PyTorch is the go-to library are:

- As a replacement for NumPy to use the power of GPUs
- As a deep learning research platform that provides maximum flexibility and speed
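Both high-level features can be seen in a few lines. The sketch below performs a NumPy-style tensor operation on a GPU if one is available, falling back to the CPU otherwise, and then uses autograd to compute a gradient. The values are arbitrary.

```python
import torch

# Tensor computing, similar to NumPy, with optional GPU acceleration.
device = torch.device("cuda" if torch.cuda.is_available() else "cpu")
a = torch.arange(6, dtype=torch.float32, device=device).reshape(2, 3)
b = torch.ones(3, 2, device=device)
product = a @ b

# Tape-based autograd: for y = sum(x^2), dy/dx = 2x.
x = torch.tensor([1.0, 2.0, 3.0], requires_grad=True)
y = (x ** 2).sum()
y.backward()

print(product)
print(x.grad)
```

Because the operations are recorded as they run, ordinary Python control flow such as loops and conditionals can be used freely inside a model.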

Downloading PyTorch: It can be installed by using the command conda install -c conda-forge pytorch

10. Seaborn

Matplotlib is the most commonly used library for data visualization, and Seaborn provides a higher-level API on top of it. It offers many choices of plotting styles, grids, and color palettes, and it works directly with Pandas DataFrames. Seaborn's default styling often produces more polished statistical plots with less code than plain Matplotlib.

It also addresses common data visualization needs, such as mapping colors to specific variables or faceting. Seaborn is often compared to the ggplot2 package in R.
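Here is a small sketch of color mapping and faceting with a Pandas DataFrame. The column names and data are invented for the example, and the output file name is a placeholder.

```python
import matplotlib
matplotlib.use("Agg")  # non-interactive backend, so the script runs without a display
import numpy as np
import pandas as pd
import seaborn as sns

# Synthetic data: exam scores that rise with hours studied, in three groups.
rng = np.random.default_rng(1)
df = pd.DataFrame({
    "hours_studied": rng.uniform(0, 10, size=120),
    "group": np.repeat(["A", "B", "C"], 40),
})
df["score"] = 50 + 4 * df["hours_studied"] + rng.normal(scale=5, size=120)

sns.set_theme(style="whitegrid")

# hue maps a color to each group; col creates one facet (panel) per group.
grid = sns.relplot(
    data=df, x="hours_studied", y="score", hue="group", col="group"
)
grid.savefig("seaborn_facets.png", dpi=100)
```

The single `relplot` call replaces what would be a loop over subplots, manual color assignment, and a legend in plain Matplotlib.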

Downloading Seaborn: Seaborn comes with Anaconda. However, it can be installed by using the command conda install seaborn

Important Mentions

There are a few other specialized libraries I'd like to mention. Scrapy and BeautifulSoup are two good libraries for collecting data from the web. They are commonly used for web crawling and data scraping.

XGBoost is a library that specifically offers a framework for gradient boosting for ML analysis. XGBoost can also be run on Hadoop, SGE, and MPI.

If you're looking for a library for natural language processing, then check out NLTK. It has modules that deal with everything ranging from text processing to semantic reasoning.

Another useful data visualization library is Plotly. It works very well with interactive web applications.

Did we miss out on your favorite library? Let us know in the comment section what your favorite library is and why.
