Pros & Cons of Cloud Computing: How to work around them?

Summary

Depending on a user's or organization's needs, many cloud infrastructure models can work. One key model that many organizations have settled on over time is a hybrid cloud strategy: a system where your own engineers manage some of your components, giving you full control over them, while other parts of the system are offloaded to the cloud.

This mix and match of components reflects how architectures have evolved across the industry. It is a very viable way for any small or large organization to make the best use of both worlds.


In the 21st century, we are building digital infrastructure at a pace like never before. Capabilities, ecosystems, infrastructure, and programming languages have all evolved at an unprecedented pace.

These days we have many options, with newer trends showing up every few weeks. It takes a modern-day computer science warrior to have an in-depth understanding of how software systems evolve.

One way to build that understanding is to compare options across the ecosystem. In this blog, we ask ourselves from first principles, as software engineers: which model of computing (model + infrastructure + services) should we choose as we build applications for use at scale?

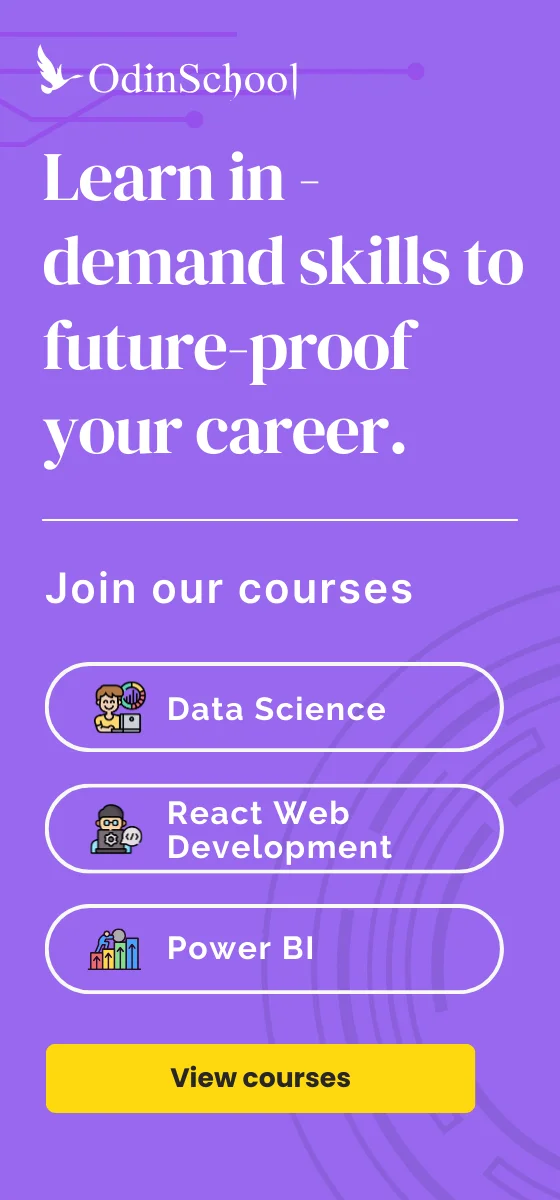

Image Source: Emergence Capital Partners

Who is the Target Audience?

This post is for companies and individuals contemplating a move to cloud or non-cloud architectures who need the architecture best suited to their software application development, and for individuals seeking a broad view of the cloud ecosystem and its nuances.

What is Cloud Computing?

Cloud computing is a capability provided to users as an abstraction over computing resources, infrastructure, services, and platforms. It's a plug-and-play way of accessing computing resources and services offered by third-party providers such as:

- Amazon
- Google
- Microsoft, and
- many other local providers.

This enables end users to build and scale applications globally.

Types of Cloud Computing Architectures

There are several broad categories of cloud computing architecture, such as:

- IaaS (Infrastructure as a service)
- PaaS (Platform as a service)
- SaaS (Software as a service)
- DaaS (Development and Data as a service)

These differ from one another, and we can combine them (mix and match) to serve the needs of applications from front-end to backend systems. There is also an interplay between them: each depends on the others, and providers offer them so that end users can choose according to their needs. So let's begin by listing the pros and cons of a cloud-first architecture and how it affects your company's bottom line, scale, reach, and usage patterns.


Pros of Cloud Computing

1. Cost Control

For an individual or a small or medium-sized company (1 to 200 people), one of the most pressing needs is to cut costs. Reaching the largest possible audience in a technology play is also important. The cloud gives users access to multi-data-center compute and storage infrastructure, which cloud providers manage and back with uptime guarantees (typically 99% or more) in their service-level agreements. Providers also take care of maintenance, which keeps maintenance costs low for the user.

You save on the cost of hiring and building a team of engineers to maintain the software/hardware infrastructure. Otherwise, that cost eats into the product's development budget, and the actual application development, marketing, etc. suffer from insufficient funds. Also, upfront hardware costs and stitching together open source software to make it useful for your application can be cumbersome, and hard to maintain without enough resources.

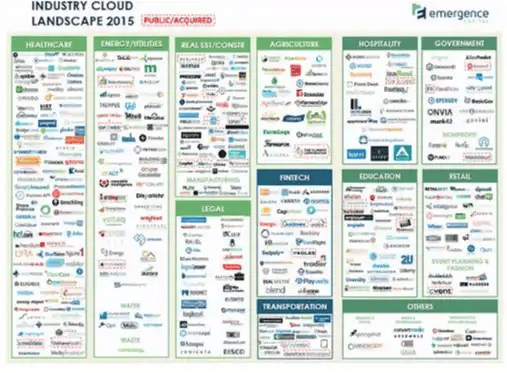

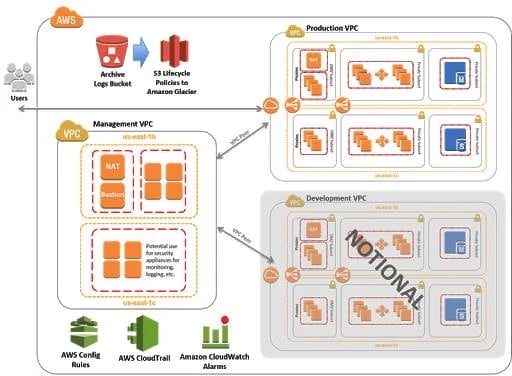

Image Source: AWS Case Study

Image Source: https://www.slideshare.com

2. Ease Of Hosting & Deployment Across Geographies

One of the key things for a viable product is to reach a large number of users at global scale, which in turn provides effective feedback for developing it further. If you wish to set up a software-first solution without cloud providers, there are challenges: managing software/hardware resources across geographies, dealing with different time zones, laws and regulations, and languages, and setting up deployment pipelines and paying networking costs, to name a few.

Large cloud providers currently take care of all of these, which makes deploying systems and software across the world very easy.

Image Source: https://aws.amazon.com

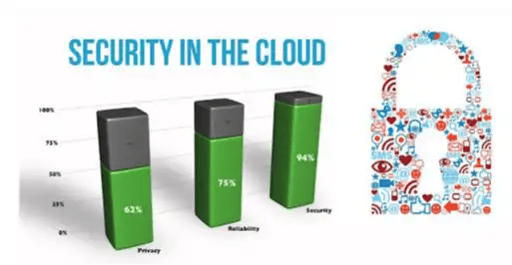

3. Security

One of the key requirements for any application or service is bulletproof security built around it. Setting up security, authentication, encryption, hardware- and software-level security, firewalls, and various proprietary shields is expensive and requires expertise that is very hard to find. Cloud providers offer all of these as paid, plug-and-play services. They have vast, highly specialized resources dedicated to building these services and provide a high degree of security and encryption across the systems in their ecosystems. Some providers also offer isolated environments for sensitive workloads, often described as private clouds.

Example: AWS GovCloud (US), an isolated AWS cloud environment where US government agencies such as the Department of Defense and NASA store and work on sensitive content.

Image Source: Orgspring.com

4. Maintainability and Auditing

A vital component of digital infrastructure (software/hardware) is how easily it can be maintained with near 100 percent uptime. Managing large clusters of hardware across geographies requires a very skilled and efficient workforce with access to excellent monitoring tools. Cloud systems provide all of this out of the box, and users are generally notified of maintenance, which usually has little impact.

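Also consider the CAP theorem from distributed computing (Consistency / Availability / Partition tolerance).

It states that a distributed data store can guarantee at most two of the three; in practice, when a network partition occurs, the system must choose between staying consistent and staying available. Cloud providers offer managed database systems that make different trade-offs, and some let you choose per request (Amazon DynamoDB, for example, supports both eventually consistent and strongly consistent reads). At scale, a cloud-first architecture built on such database systems is therefore a very practical approach.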
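To make the trade-off concrete, here is a toy Python model of two replicas during a network partition. It is an illustration only, not how any real cloud database works, and the class `ToyReplicatedStore` and its methods are hypothetical. A "CP" store rejects writes it cannot replicate; an "AP" store accepts them locally, so the other replica serves stale data until the partition heals.

```python
class ToyReplicatedStore:
    """Two in-memory replicas of a key-value store (illustration only)."""

    def __init__(self, mode):
        if mode not in ("CP", "AP"):
            raise ValueError("mode must be 'CP' or 'AP'")
        self.mode = mode
        self.replicas = [{}, {}]  # replica 0 and replica 1
        self.partitioned = False

    def partition(self):
        """Simulate a network partition: replicas can no longer talk."""
        self.partitioned = True

    def write(self, key, value, replica=0):
        """Write through one replica; return True if the write was accepted."""
        if not self.partitioned:
            # Healthy network: replicate synchronously to both copies.
            for copy in self.replicas:
                copy[key] = value
            return True
        if self.mode == "CP":
            # Consistency over availability: refuse what we cannot replicate.
            # (This toy only restricts writes; real CP systems may refuse reads too.)
            return False
        # Availability over consistency: accept on the local replica only.
        self.replicas[replica][key] = value
        return True

    def read(self, key, replica=0):
        return self.replicas[replica].get(key)

    def heal(self, source=0):
        """End the partition and resync naively: the source replica wins."""
        self.partitioned = False
        self.replicas[1 - source].update(self.replicas[source])
```

In CP mode, a write during the partition returns `False` and both replicas keep agreeing; in AP mode the write succeeds, but reading from replica 1 returns the old value until `heal()` runs.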

For most organizations, the ability to audit their systems and see deficiencies and flaws in their digital strategy is vital. Digital systems are pivotal to every decision inside an organization, so organizations need precise information on how their systems are audited. Most cloud service providers have to meet exacting standards, so their processes and systems go through various industry-standard audits, and clients can use these results as benchmarks for their own end users. Providers publish these reports; AWS, for example, makes its compliance reports available through AWS Artifact.

Cons of Cloud Computing

There are significant drivers for a cloud-first architecture, but it also comes with several caveats that sometimes make it unsuitable for individuals or organizations.

1. Ballooning Costs Due To Pricing Changes

While a cloud-first architecture can be cost-effective, cloud providers roll out many new services with particular cost structures for their user base. As these services become more popular, providers may reconfigure their pricing to suit their own profitability. Most cloud services are cost-effective up to a certain scale and size, but at mega-scale they become more and more expensive for the end user, and many also have usage caps at that scale.

Also, many services are cost-effective in one region and expensive in others. From a deployment standpoint, many AWS services are cheaper in US regions than in other geographies, although this is changing as adoption grows and newer data centers are set up.

2. Service Termination/Deprecation

A cloud provider keeps a service running and maintained because of its high usage and profitability. Sometimes, over a long period of time (3-8 years), a service becomes unviable for the provider to keep maintaining and is no longer cost-effective. In such cases, the provider starts to deprecate the service in a phased manner and pushes its long-tail clients to migrate to a different service (similar in nature). Providers assist with migration, but such a move can cause a lot of technical and customer challenges, and a small client who isn't able to migrate can suffer. If your organization depends heavily on a particular service and the provider deprecates it, you are forced to move to something else even though your system runs fine on the existing one.

3. Downtime

One of the major disadvantages of a cloud-only architecture is downtime: the time the cloud provider takes to do maintenance on its services. No matter how robust, scalable, and efficient a cloud system is, it requires effective maintenance and upgrades. Since this is the cloud provider's job, providers notify users of planned downtime, of periods when services may experience some inconsistencies, or of when they divert a specific amount of load/traffic. This can have an adverse impact on sensitive and real-time applications that expect 100% uptime. You can mitigate this to some extent by talking to the provider, but outages and downtime can still have an adverse business impact.
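A quick way to see what an uptime guarantee means in practice is to convert the availability percentage into an annual downtime budget. A minimal Python sketch (the function name is ours, and leap years are ignored):

```python
HOURS_PER_YEAR = 365 * 24  # ignores leap years


def allowed_downtime_hours(availability_percent, period_hours=HOURS_PER_YEAR):
    """Maximum downtime (in hours) an availability target permits over a period."""
    if not 0 <= availability_percent <= 100:
        raise ValueError("availability must be between 0 and 100")
    return (1 - availability_percent / 100) * period_hours


# Print the yearly downtime budget for a few common targets.
for target in (99.0, 99.9, 99.99):
    hours = allowed_downtime_hours(target)
    print(f"{target}% -> {hours:.2f} h/year ({hours * 60:.1f} min)")
```

For example, 99% still allows about 87.6 hours (roughly three and a half days) of downtime a year, while 99.9% allows about 8.76 hours, which is why real-time applications care about the exact figure in a provider's SLA.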

Example: In 2017, Amazon S3, one of the most reliable and widely used AWS services, went down in the US-East-1 region, causing a global panic.

Image Source: https://twitter.com/

There are ways to mitigate this, but some services, especially newly released ones, can experience significant downtime and issues.
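One common client-side mitigation for transient outages and throttling is to retry failed calls with exponential backoff and jitter. The sketch below is generic Python, not an AWS SDK API (the AWS SDKs ship their own configurable retry behavior); `call_with_backoff` and its parameters are hypothetical, and which exceptions count as transient is an assumption you would adapt to your client library.

```python
import random
import time


def call_with_backoff(operation, max_attempts=5, base_delay=0.1, max_delay=5.0,
                      retry_on=(TimeoutError, ConnectionError), sleep=time.sleep):
    """Call operation(), retrying transient failures with exponential backoff.

    Uses "full jitter": each wait is a random value between 0 and
    min(max_delay, base_delay * 2**attempt), which spreads retries out so
    many clients don't hit a recovering service at the same moment.
    """
    for attempt in range(max_attempts):
        try:
            return operation()
        except retry_on:
            if attempt == max_attempts - 1:
                raise  # out of attempts: surface the last error to the caller
            cap = min(max_delay, base_delay * (2 ** attempt))
            sleep(random.uniform(0, cap))
```

The `sleep` parameter is injectable so the retry logic can be tested without actually waiting. Retries only help with short, transient failures; a long regional outage like the 2017 S3 incident needs architectural answers such as multi-region redundancy.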

4. Security / Privacy

While cloud systems have more robust security and encryption/shields than conventional systems, their infrastructure is still subject to a wide variety of attacks. Some super-secure offerings guarantee no data/privacy loss but come at a hefty cost, and in some cases attacks can still cause a lot of damage.

Due to the plug-and-play format of a cloud architecture, a malicious agent or hacker who has access to even a single private authentication key can cause widespread damage to your system. Users often mishandle AWS authentication keys, for example by committing them to public Git repositories.

Many hackers run bots that detect such leaked keys. They immediately start to perform actions like these on your cloud architecture:

- launch instances,
- launch network DDoS attacks,
- install malware, etc.

So even a small mistake by a non-vigilant developer can expose the entire digital infrastructure to attack. The plug-and-play nature of the cloud also makes such attacks easy to launch once a key is leaked.
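As a first line of defense, a pre-commit check can refuse commits that contain something shaped like an AWS access key ID. This is a minimal Python sketch that only matches the access key ID format (a 20-character ID beginning with `AKIA` for long-term keys or `ASIA` for temporary ones); it does not detect secret access keys and is no substitute for dedicated tools such as AWS Labs' git-secrets. The function names are hypothetical.

```python
import re
from pathlib import Path

# AWS access key IDs: a 4-letter prefix (AKIA or ASIA) followed by
# 16 uppercase letters or digits, 20 characters in total.
ACCESS_KEY_ID = re.compile(r"\b(?:AKIA|ASIA)[0-9A-Z]{16}\b")


def find_access_key_ids(text):
    """Return (line_number, match) pairs for anything shaped like an AWS key ID."""
    hits = []
    for lineno, line in enumerate(text.splitlines(), start=1):
        for match in ACCESS_KEY_ID.finditer(line):
            hits.append((lineno, match.group()))
    return hits


def scan_files(paths):
    """Scan the given files; return True if any suspected key was found."""
    found = False
    for path in paths:
        text = Path(path).read_text(encoding="utf-8", errors="ignore")
        for lineno, key in find_access_key_ids(text):
            # Print only a prefix so the scanner itself doesn't leak the key.
            print(f"{path}:{lineno}: possible AWS access key ID {key[:8]}...")
            found = True
    return found


def main(paths):
    """Exit code for a pre-commit hook: 1 if a suspected key is found, else 0."""
    return 1 if scan_files(paths) else 0
```

To use it as a Git pre-commit hook, pass the staged file names (for example from `git diff --cached --name-only`) to `main()` and exit with its return value. If a key has already been pushed, deactivate and rotate it immediately; deleting it from the repository is not enough.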

5. Lack Of Flexibility (Too Many Constraints/Caveats)

Building on a single cloud provider constrains a user/organization to stick with that provider for all services. For example, if you set up your entire digital ecosystem on AWS, you are tied to its rules, and migrating to another provider such as MS Azure or Google Cloud can be a very tedious and expensive proposition. The problem is similar on any other cloud provider.

Several AWS services also have serious capacity constraints. For example, DynamoDB, a very popular service, can in theory store billions of key-value objects, but it takes time to initialize and scale, and in provisioned-capacity mode you need to plan capacity in advance. During high peaks, requests can be throttled or time out because of these system limits. Almost all services have such limitations, and the system design has to work around them.
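For DynamoDB tables in provisioned-capacity mode, the capacity to provision can be estimated from item size and request rate. The sketch below encodes DynamoDB's documented capacity-unit rules as we understand them: one write capacity unit (WCU) covers one standard write per second of an item up to 1 KB, and one read capacity unit (RCU) covers one strongly consistent read, or two eventually consistent reads, per second of an item up to 4 KB. Transactional requests cost more and are not modeled; check the current DynamoDB documentation before sizing a real table.

```python
import math


def write_capacity_units(item_size_kb, writes_per_second):
    """WCUs for standard writes: each write uses one WCU per 1 KB (rounded up)."""
    if item_size_kb <= 0:
        raise ValueError("item size must be positive")
    return math.ceil(item_size_kb) * writes_per_second


def read_capacity_units(item_size_kb, reads_per_second, strongly_consistent=True):
    """RCUs: each strongly consistent read uses one RCU per 4 KB (rounded up);
    eventually consistent reads need half as much."""
    if item_size_kb <= 0:
        raise ValueError("item size must be positive")
    total = math.ceil(item_size_kb / 4) * reads_per_second
    return total if strongly_consistent else math.ceil(total / 2)
```

For example, 100 writes per second of 3 KB items need 300 WCUs, and 100 strongly consistent reads per second of 6 KB items need 200 RCUs (100 if eventually consistent). Traffic peaks above the provisioned figure are what cause the throttling described above.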

Several large companies have deep pockets and hence prefer to have in-house teams build and maintain their infrastructure to overcome these constraints. You also have much better control over every part of the digital ecosystem when your own engineers manage everything for your applications.


Organizations that use Cloud-based Solutions for their Business

Many organizations have moved to the cloud and are using the cloud to architect modern-day software solutions.

Examples:

Flipkart: started with a completely in-house solution, migrated to MS Azure for all its services, and provides millions of Indian users with cloud-based e-commerce.

Delhivery: India's premier logistics startup, based entirely on AWS, with a serverless architecture where it deploys several microservices and manages everything in the cloud.

Snapdeal: started by using AWS for its entire architecture and eventually built its own internal cloud, called Cirrus.

Ola: uses Azure for much of its backend infrastructure and has a deep partnership with Microsoft to use its infrastructure.

More companies using cloud services: Wipro, NDTV, Hotstar.com, Shaadi.com, Chumbak, Tata Motors, PRA CEO, Redbus, Big Bazaar, etc.

How to set up Business/Software Solutions using Cloud?

A key part of any software system today, be it mobile, desktop, or web-based, is having a backend and a front end. These two systems typically speak to one another over HTTP through a REST API, using HTTP verbs such as GET, POST, PUT, and DELETE.

Setting up a Backend Web-based REST API on the Cloud

A startup that needs to set up a REST API on AWS to handle its backend systems can do so by integrating three services.

Here are the steps:

- Set up an API Gateway REST endpoint,
- set up the transformation logic on AWS Lambda, and finally
- store the transformed object in S3 / DynamoDB / RDS (Amazon Relational Database Service, with MySQL, PostgreSQL, etc. available).
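The three steps above can be sketched as a single Python Lambda handler. This is a minimal sketch assuming API Gateway's Lambda proxy integration for a REST API, where the request arrives as an `event` dict with `httpMethod` and a JSON string in `body`. The transformation logic is hypothetical, and an in-memory dict (`FAKE_TABLE`) stands in for S3/DynamoDB/RDS so the example runs locally.

```python
import json
import uuid

# Hypothetical stand-in for the storage layer (S3 / DynamoDB / RDS).
FAKE_TABLE = {}


def transform(payload):
    """Hypothetical transformation logic: trim string fields and add an id."""
    record = {k: v.strip() if isinstance(v, str) else v for k, v in payload.items()}
    record["id"] = str(uuid.uuid4())
    return record


def response(status, body):
    """Response shape expected by API Gateway's Lambda proxy integration."""
    return {
        "statusCode": status,
        "headers": {"Content-Type": "application/json"},
        "body": json.dumps(body),
    }


def lambda_handler(event, context):
    """Entry point for POST requests forwarded by API Gateway."""
    if event.get("httpMethod") != "POST":
        return response(405, {"error": "method not allowed"})
    try:
        payload = json.loads(event.get("body") or "{}")
    except json.JSONDecodeError:
        return response(400, {"error": "body must be valid JSON"})
    if not isinstance(payload, dict):
        return response(400, {"error": "body must be a JSON object"})
    record = transform(payload)
    FAKE_TABLE[record["id"]] = record  # store the transformed object
    return response(201, {"id": record["id"]})
```

In a real deployment you would replace the `FAKE_TABLE` assignment with a call to your storage service (for example `put_item` on a boto3 DynamoDB `Table` resource) and grant the function's IAM role permission to write to it. Note that HTTP APIs in API Gateway can use a newer event format, where the method is not in `httpMethod`.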

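Any skilled engineer can do this in a day. This system can also scale to over 10 million hits a day and is a robust way to build backend systems. Because every component is billed per use, costs stay low at modest traffic but grow with request volume, so estimate them against the provider's current pricing before relying on a specific daily figure.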
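Because pay-per-use costs scale with traffic, a back-of-the-envelope estimate is worth doing before quoting a daily figure. This hypothetical helper combines a per-request charge with a per-GB-second compute charge, the general shape of serverless pricing. Every price is an input you must take from the provider's current pricing page; free tiers, storage, and data transfer are ignored.

```python
def estimate_daily_cost(requests_per_day, price_per_million_requests,
                        avg_duration_ms=0.0, memory_gb=0.0, price_per_gb_second=0.0):
    """Rough daily cost of a pay-per-request backend.

    request cost = requests / 1,000,000 * price per million requests
    compute cost = requests * duration (s) * memory (GB) * price per GB-second
    """
    request_cost = requests_per_day / 1_000_000 * price_per_million_requests
    gb_seconds = requests_per_day * (avg_duration_ms / 1000) * memory_gb
    return request_cost + gb_seconds * price_per_gb_second
```

For instance, with a placeholder price of 1.0 per million requests (not a real quote), `estimate_daily_cost(10_000_000, 1.0)` gives 10.0 per day for the request charge alone, before any compute. Run the estimate once per billed component (gateway, functions, database) and add the results.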

Front End/Client-side Angular Code

Deploying Angular client-side code is super easy on a cloud-first architecture. Spawning EC2 instances (servers inside AWS) takes a few clicks, and setting up authentication and authorization is also super easy.

Here you can set up a web server such as Tomcat or Jetty on these servers, or use a managed one, to serve your client-side application in any geography, with access to deep analytics on the number of users etc. through AWS analytics tools such as Amazon QuickSight.


Hosting a Static App Website

One of the coolest things about AWS S3 is that it can serve static content (content that doesn't change) straight from storage. You can put your files into S3 folders, add an index.html file, and create scalable static sites (as many as you like) in a matter of hours, with Route 53 as DNS. Such ease of hosting a website is one of the reasons the cloud is so popular with new startups and businesses.
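Configuring a bucket as a static website mostly comes down to two small JSON documents: the website configuration (which object to serve as the index and which on errors) and a bucket policy that allows public reads. A minimal Python sketch that builds both; the bucket name `example-startup-site` is hypothetical, and actually applying them (for example with boto3's `put_bucket_website` and `put_bucket_policy`) requires AWS credentials and is left out.

```python
import json


def website_configuration(index_document="index.html", error_document="error.html"):
    """Website settings in the shape boto3's put_bucket_website expects."""
    return {
        "IndexDocument": {"Suffix": index_document},
        "ErrorDocument": {"Key": error_document},
    }


def public_read_policy(bucket_name):
    """Bucket policy letting anyone GET objects, as a public static site needs."""
    return {
        "Version": "2012-10-17",
        "Statement": [{
            "Sid": "PublicReadGetObject",
            "Effect": "Allow",
            "Principal": "*",
            "Action": "s3:GetObject",
            "Resource": f"arn:aws:s3:::{bucket_name}/*",
        }],
    }


# The policy is passed to S3 as a JSON string.
print(json.dumps(public_read_policy("example-startup-site"), indent=2))
```

The bucket's S3 Block Public Access settings must also permit a public bucket policy, or S3 will reject it. Only make a bucket public if everything in it is meant to be public.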