Machine Learning For Dummies

Summary

Machine learning has generated enormous excitement about its potential to optimize and automate all kinds of tasks. Yet frustration is growing as many organizations struggle to deploy models because of legacy infrastructure, a lack of clear direction, and unrealistic expectations. The field is full of passion, confusion, and deceit, but it holds immense potential to revolutionize industries. Understanding the distinction between machine learning and artificial intelligence is crucial: while AI aims to perform tasks typically done by humans, ML teaches computers how to learn. With the massive amount of data now available, ML will reshape logistics, agriculture, finance, healthcare, and more, changing everything in its path.

Enter a quick search on Google News for ‘Machine Learning.’ The first article that pops up? ‘How to differentiate between AI, Machine Learning and Deep Learning.’

This reinforces a conversation I had recently with a seasoned IT consultant. He told me that two years ago there was a great deal of excitement about Machine Learning (ML). Everyone reveled in the potential to optimize and automate, well, pretty much everything. Today, he’s concerned that the conversation (on the speaker circuit and at the executive level) is still ‘Right, we need a plan; we need a data-centric culture.’

“Data scientists are frustrated because their models aren’t deploying.”

“Engineers are frustrated because they have to work around legacy infrastructure.”

“Architects are frustrated because they’re expected to wave a magic wand.”

“Business leaders are frustrated because projects aren’t delivering on time or on budget.”

“Everyone is frustrated!”

A clear delivery roadmap is required: one that gives visibility over the end-to-end infrastructure, one that outlines the technology along with its human resource requirements, and, above all, a coordinated direction (a vision) from senior management.

According to him, two years later, this conversation hasn’t changed. People are still talking about the ‘how’ so much that very little of the ‘what’ is actually getting done. The result?

People are frustrated, and quite rightly, too. Machine learning has been dangled in front of curious data engineers and data scientists, but they’re held back. It’s been dangled in front of clients as a solution to their efficiency or customer experience requirements. Yet companies haven’t laid out any practical frameworks to achieve it.

Welcome to Machine Learning!

In all seriousness, don’t be alarmed. Any new technology with this much potential to change everything will, of course, attract passion, confusion, and deceit. People who don’t know that they don’t know things will mislead others. Some people know they don’t know things and mislead others anyway. This is not a flaw of machine learning; it is a signature of human beings and innovation. Don’t be alarmed by the ‘end-to-end’ frustration I describe in the market, but do be forewarned. Yes, you should still jump head first into the field, because it will change almost every industry, but I’ll get to that…

It’s natural that machine learning as a topic has this much energy and confusion. Imagine going back in time to visit people who had only ever seen horse-drawn carriages, and showing them the blueprint for a Ferrari. Would they immediately begin work on the Ferrari? Or would they stop, breathe, and think through the practical implications of a car that can reach 100 km/h in 2.9 seconds?! Would they say, ‘No! Stop! Let’s build the roads first!’? Of course they wouldn’t. They’d want to build the shiny, fast car first. They’d want it now. Then they’d have a beautiful car sitting there, useless. Without the roads, it can’t do what it was designed for.

Who knows, maybe people need the beautiful, fast car finished first. Maybe having it sit there uselessly helps them commit to the infrastructural overhaul. The cost involved is often huge and the risk significant. Both things kind of have to happen together. Think of the lack of charging ports for electric vehicles. Chicken or egg? Ports or cars first? More charging ports will encourage more people to drive electric vehicles, but nobody will install more charging ports until there are more electric vehicle drivers! A Catch-22 like this often means that the chicken and the egg have to evolve awkwardly at the same time. This is exactly what’s happening in the world of data.

What can you expect from a career in Machine Learning?

So come with me, friends, and allow me to lift the veil on what you can expect from a career in machine learning. You’re joining the field at the perfect time. Consider the first ‘Perceptron,’ an almost mechanical early example of ‘machine learning’: it was invented in 1957 (nearly 62 years ago!). Think about how many passionate mathematicians have gone before you, and how many frustrated engineers, scientists, and developers. They’ve worked tirelessly to advance the state of machine learning, all so that you, today, can stand on the shoulders of giants and build the future. And make no mistake: self-writing applications will give people the ability to simply express their will to an application.

The reality of machine learning is that the concept was essentially stolen from brain studies. We knew brains were the most complex things in the universe because they were capable of the most complicated things. (There’s a distinction between ‘complex’ and ‘complicated’ that I’ll make in just a bit.) Brains are never static; they are in a constant state of being ‘rewritten.’ It’s funny: it’s hard to talk about the brain without using computer jargon, and vice versa.

The reason for this is simple. After all, what is ‘computation’? At its most basic, computation is just 1) representation, 2) manipulation, and 3) output. A brain is one type of computer, and our modern computers are another. You’re entering a world that will very likely produce the operating system for every other advanced technology on the planet. In some way, shape, or form, ML will be the brain to the hardware, whether that hardware is quantum computers, sensory infrastructure, robotics, nanotechnology, or, probably, many other things that don’t exist yet. And this will happen across every industry, because everything needs to be ‘computed’ by something. As it stands, humans use the information to make decisions; in the future, the machines will make them.

Machine Learning & Artificial Intelligence

Machine learning is often confused with ‘artificial intelligence.’ I was speaking to a man who heads up a data science department, and he described the basic distinction he makes between AI and ML:

- Machine learning (ML) is teaching computers how to learn; whereas
- Artificial Intelligence (AI) completes tasks typically the purview of a human.
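
To make ‘teaching computers how to learn’ a little more concrete, here is a minimal Python sketch of the perceptron learning rule, the 1957 idea mentioned earlier. It is an illustrative toy, not a reproduction of the original machine: the function names `train_perceptron` and `predict`, the learning rate, the number of passes, and the tiny logical-AND dataset are all assumptions chosen for this example. The program is never told the rule for AND; it only nudges its weights whenever it gets an answer wrong.

```python
# Minimal perceptron sketch (hypothetical helper names), trained on logical AND.

def predict(w, b, x):
    """Fire (1) if the weighted sum of inputs plus bias is positive, else 0."""
    return 1 if sum(wi * xi for wi, xi in zip(w, x)) + b > 0 else 0

def train_perceptron(samples, labels, epochs=10, lr=1.0):
    """Learn weights w and bias b with the classic perceptron update rule."""
    w = [0.0] * len(samples[0])
    b = 0.0
    for _ in range(epochs):
        for x, y in zip(samples, labels):
            error = y - predict(w, b, x)  # -1, 0 or +1
            # Adjust only when the prediction was wrong (error != 0)
            w = [wi + lr * error * xi for wi, xi in zip(w, x)]
            b += lr * error
    return w, b

X = [(0, 0), (0, 1), (1, 0), (1, 1)]
y = [0, 0, 0, 1]  # truth table for logical AND

w, b = train_perceptron(X, y)
print([predict(w, b, x) for x in X])  # → [0, 0, 0, 1]
```

Because AND is linearly separable, the weights settle after a few passes. A single perceptron cannot learn XOR, which is not linearly separable; that limitation is one reason later networks combine many such simple units.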

I personally disagree. I think AI is a buzzword. It is conceptual, just like the word ‘intelligence,’ so it has no real, concrete definition. In my opinion, ML is only the current mechanism that may one day lead to ‘general AI,’ if that is even possible.

Nevertheless, he has a great point. Machine learning is clearly a sub-component of AI, and AI will likely perform tasks that people currently do. But, and it’s a big ‘but’: what is ‘intelligence’? The field of psychology has agonized over the exact nature of intelligence and made very little progress. Oh, we know what ‘normal’ is (a ‘mean’ and a ‘standard deviation’) on a specific test. But does that test actually measure ‘intelligence’? Sure, we can use this ‘normal’ to gauge what is ‘abnormal,’ which is how we generate what we now call IQ scores. The reality is that intelligence is an incredibly complex and multivariate phenomenon. It’s also highly subjective (at least until the brain is fully mapped and understood, and likely even then). In my opinion, the best definition of intelligence from psychology is ‘the ability to react to new situations.’
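
The ‘mean and standard deviation’ idea can be sketched as a deviation IQ score: a raw test score is converted to a z-score against a norm group, then rescaled. The mean of 100 and standard deviation of 15 follow the common convention used by tests such as the Wechsler scales; the function name `deviation_iq` and the four-person norm group are hypothetical, and real norming relies on large, age-stratified samples.

```python
from statistics import mean, pstdev

def deviation_iq(raw_score, norm_scores, iq_mean=100, iq_sd=15):
    """Convert a raw test score to the IQ scale relative to a norm group."""
    mu = mean(norm_scores)        # what counts as 'normal' on this test
    sigma = pstdev(norm_scores)   # typical spread around 'normal' (population SD)
    z = (raw_score - mu) / sigma  # standard deviations above/below the mean
    return iq_mean + iq_sd * z    # rescale to the conventional IQ scale

# Hypothetical norm group of raw scores on some test
norm_group = [40, 40, 60, 60]
print(deviation_iq(70, norm_group))  # → 130.0
print(deviation_iq(50, norm_group))  # → 100.0
```

Note what this does and does not tell you: a score of 130 only says someone sits two standard deviations above this group on this particular test, which is exactly why the question of whether the test measures ‘intelligence’ remains open.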

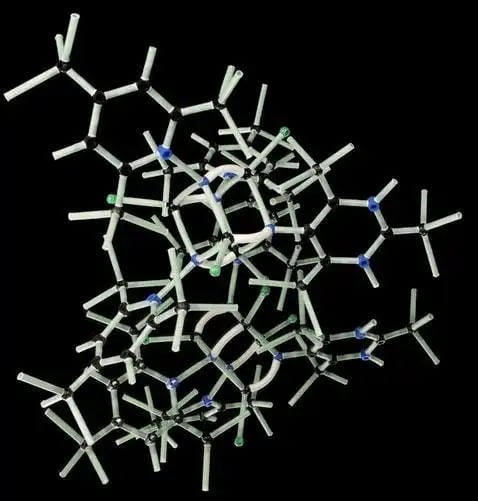

Right, now for that distinction between ‘complex’ and ‘complicated.’ A complicated thing is, well, truly complicated. It has a few patterns, maybe the appearance of randomness. Maybe it’s just exquisite design. As a rule of thumb, it looks impossible to replicate. Complexity, on the other hand, is different. Complex things can look complicated at first glance, but they are in fact simply the result of very simple things combined.

Take Lego, for example: just the original standard block. In principle, you could build the Galactic Empire’s Death Star. It’s many simple Lego blocks put together according to a straightforward blueprint. Life is the same: all of life’s ‘complicated’ stuff can be broken down into atoms (or even further, into strings in string theory). You can build remarkably complicated constructions from basic things; the entire universe is made out of atoms. Go up a layer of ‘abstraction’ and suddenly you have an array of molecules, and so on.

The fact of all life is that, really, nothing is complicated. Everything is simply complex. Everything can be broken down into its basic ingredients, and the rules that combine them can be calculated mathematically. Everything can be reverse-engineered once you understand the rules. It also means that you can build staggering, extraordinary, beautiful things out of very simple pieces.

You will find this mentality useful when approaching machine learning, and you will find that this course gives you those ‘Lego pieces.’

Jumping back to the definition of intelligence as the ability to react to novel situations: self-driving cars are a good example. Talks by Waymo at Stanford and by Tesla on various podcasts emphasize one fundamental thing: ‘the long tail’ of events. It’s not enough to train systems on specific things; you have to teach the machine ‘comprehension.’ Isolated categorization is not ‘understanding’ and would be a danger on our roads. Why? Because just one outlier, one ‘weird’ situation, can put somebody’s life at the mercy of a ‘black box.’ As Elon Musk said, you basically must teach the car to ‘understand,’ not just see. Even if, statistically speaking, the car drives better than a human being, the fact that it’s a ‘machine’ somehow changes everything. The standard is held much higher because people hate the idea of an error without a human touch. Weird, huh?

As a funny aside, I heard Rory Sutherland, the behavioral economics and advertising guru, joke that people will start messing with driverless cars. They’ll draw lines on roads, use mirrors to confuse them, and so on, just to provoke errors. That’s what humans do! So we have to teach our artificial brains to recognize when these and other unusual events are happening.

https://www.spectator.co.uk/2017/12/are-driverless-cars-really-the-future/

On that note, now for a comment on Artificial Intelligence, the holy grail. The buzzword. Irrationality has always been a signature of human endeavor. We sometimes do the most staggeringly daft things for the dumbest reasons, yet would gladly do them again. Think of ‘love’ or ‘respect.’ Are you making truly rational decisions? I ask you this: is human-level ‘intelligence’ really what the field of machine learning should aspire to? Whether it’s attainable is beside the point; the last thing I want is a car that gets a bit grumpy when I ask it to start early in the morning, or a toaster that doesn’t like how hard I pushed down the toast and takes revenge by burning my bread. And that’s before we even ask, ‘Would it want to be alive?’

Now let’s consider some of the potential of machine learning. One main reason for ML’s rise in public consciousness today is the amount of data available on the planet. I recently read a statistic claiming that as much data as was created in all of history up to 2003 is now created every two days! To be honest, that sounds low; I’ve also read that 2.5 quintillion bytes of data are created daily. Either way, there is a lot of data, and more data means better machine learning. (There are also ways of manufacturing ‘real data’ in simulations...) With the Internet of Things, this explodes again, as every toaster and fridge begins to contribute to our existing maelstrom of tweets and pings. We use this data to train models (which is, at heart, what machine learning is), and these models are essentially molded on the real world as captured in the data. These models are then deployed as very small amounts of code representing vast numbers of instances. Then models begin to be used to make models (on models, on models…).
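
The idea that a trained model is a very small amount of code standing in for a vast number of instances can be sketched with the simplest model there is: a straight line fitted by ordinary least squares. In this hypothetical example, 10,000 noisy observations generated from y = 3x + 2 are compressed into just two learned numbers, a slope and an intercept. The data, the noise level, and the `fit_line` helper are assumptions made purely for illustration.

```python
import random

def fit_line(xs, ys):
    """Ordinary least squares for y ≈ slope * x + intercept (closed form)."""
    n = len(xs)
    x_bar = sum(xs) / n
    y_bar = sum(ys) / n
    cov = sum((x - x_bar) * (y - y_bar) for x, y in zip(xs, ys))
    var = sum((x - x_bar) ** 2 for x in xs)
    slope = cov / var
    intercept = y_bar - slope * x_bar
    return slope, intercept

random.seed(0)  # reproducible synthetic data
# Hypothetical 'real world': 10,000 noisy observations of y = 3x + 2
xs = [random.uniform(0, 10) for _ in range(10_000)]
ys = [3 * x + 2 + random.gauss(0, 1) for x in xs]

slope, intercept = fit_line(xs, ys)
print(f"slope={slope:.2f}, intercept={intercept:.2f}")
```

Once fitted, the 10,000 observations can be discarded: predicting a new case only needs `slope * x + intercept`. Real models have far more parameters, but the principle of distilling many examples into a compact learned function is the same.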

Anything that relies on learned experience will be changed forever: logistics, crop management and agriculture in general, investing in the stock market (heck, will there even be a stock market as we know it today?). Will ML combine with blockchain? Will this technology create a decentralized ‘brain’ that allocates all the resources on our planet? That ultimate utilitarian future is half obvious and half terrifying. Fraud protection in payment processing is already powered by ML algorithms, as are digital media targeting and customer segmentation. Healthcare could be next, with tailored medicine and preemptive diagnoses. Pharmaceutical production… everything. Machine learning is going to change everything, and the only constant in machine learning is change.